The intersection of deep learning and 3D graphics rendering is a rapidly evolving area of research and application. Traditional rendering techniques, while effective, often require extensive computational resources and can be time-consuming. Deep learning offers innovative approaches that can enhance and accelerate the rendering process, making it possible to achieve photorealistic results more efficiently. This article explores the potential of deep learning in 3D graphics rendering, examining its applications, benefits, challenges, and future implications.

1. Understanding 3D Graphics Rendering

1.1 What is 3D Graphics Rendering?

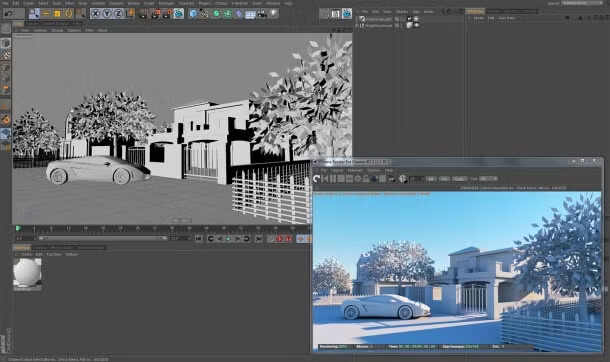

3D graphics rendering is the process of converting 3D models into 2D images. This involves simulating the interaction of light with surfaces to create lifelike representations. Rendering techniques can be broadly categorized into two types: real-time rendering and offline rendering.

- Real-Time Rendering: Used in applications like video games and virtual reality, real-time rendering prioritizes speed to ensure smooth user experiences. Techniques like rasterization are commonly employed to achieve quick results.

- Offline Rendering: Used in film production and architectural visualization, offline rendering prioritizes image quality and realism over speed. Ray tracing and global illumination techniques are often used to simulate realistic lighting effects.
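To make "converting 3D models into 2D images" concrete, the following is a minimal sketch of a pinhole perspective projection, the step that maps a 3D point to a pixel position. The function name `project_vertex`, the camera convention (camera at the origin, looking down the negative z axis, +y up), and the default field of view are assumptions chosen for illustration, not the conventions of any particular renderer.

```python
import math

def project_vertex(vertex, width, height, fov_y_degrees=60.0):
    """Project a camera-space point (x, y, z) to pixel coordinates.

    Assumed convention: the camera sits at the origin and looks down the
    negative z axis, with +y pointing up. Returns None for points that are
    not in front of the camera.
    """
    x, y, z = vertex
    if z >= 0:
        return None  # at or behind the camera plane
    depth = -z
    # Focal length in normalized units, derived from the vertical field of view.
    f = 1.0 / math.tan(math.radians(fov_y_degrees) / 2.0)
    aspect = width / height
    # Perspective divide: farther points land closer to the image center.
    ndc_x = (f / aspect) * x / depth
    ndc_y = f * y / depth
    # Map normalized coordinates in [-1, 1] to pixels (origin at top-left).
    px = (ndc_x + 1.0) * 0.5 * width
    py = (1.0 - ndc_y) * 0.5 * height
    return px, py
```

A point on the viewing axis, such as `(0, 0, -5)`, lands at the image center, and the same sideways offset moves fewer pixels as the point gets farther away.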

1.2 Traditional Rendering Techniques

Traditional rendering techniques rely on complex algorithms to simulate light behavior. Some of the most common techniques include:

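- Rasterization: Converts 3D models into 2D images by projecting each triangle's vertices onto the screen and filling in the pixels the triangle covers. It is highly efficient, but because it processes each triangle largely in isolation, it struggles to accurately simulate complex light interactions such as reflections and indirect lighting.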

- Ray Tracing: Traces the path of light rays as they interact with objects in a scene. This method produces realistic images by simulating reflections, refractions, and shadows, but it is computationally intensive.

- Global Illumination: Simulates the indirect lighting that occurs when light bounces off surfaces. Techniques like radiosity and photon mapping are used to achieve realistic lighting effects.
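The core operation of ray tracing, testing whether a ray hits an object, can be sketched for the simplest case of a sphere. This is an illustrative example only: the function name `ray_sphere_hit` and the tuple-based vectors are assumptions, and a production ray tracer would add acceleration structures, shading, and recursive bounces on top of a test like this.

```python
import math

def ray_sphere_hit(origin, direction, center, radius):
    """Return the distance along the ray to the nearest hit, or None.

    Solves |origin + t * direction - center|^2 = radius^2 for t >= 0.
    `direction` is assumed to be a unit vector.
    """
    oc = [o - c for o, c in zip(origin, center)]
    b = 2.0 * sum(d * v for d, v in zip(direction, oc))
    c = sum(v * v for v in oc) - radius * radius
    disc = b * b - 4.0 * c  # quadratic coefficient a == 1 for a unit direction
    if disc < 0:
        return None  # the ray misses the sphere entirely
    sqrt_disc = math.sqrt(disc)
    # Try the nearer root first; skip hits that lie behind the ray origin.
    for t in ((-b - sqrt_disc) / 2.0, (-b + sqrt_disc) / 2.0):
        if t >= 0:
            return t
    return None
```

A renderer repeats a test like this for every pixel and every bounce, which is where the computational cost mentioned above comes from.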

2. The Role of Deep Learning in 3D Graphics Rendering

2.1 Deep Learning Overview

Deep learning, a subset of machine learning, involves training neural networks on large datasets to learn complex patterns and representations. Convolutional Neural Networks (CNNs) are particularly effective for image-related tasks, making them suitable for applications in graphics rendering.
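The building block of a CNN is a small filter slid across the image. The sketch below implements that operation in plain Python to show what a single convolutional filter computes. The names `conv2d` and `EDGE_KERNEL` are illustrative, and the kernel weights are hand-picked here, whereas in a trained network they would be learned from data.

```python
def conv2d(image, kernel):
    """Valid-mode 2D cross-correlation, the operation CNN layers call convolution."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = 0.0
            # Weighted sum of the image patch under the kernel.
            for di in range(kh):
                for dj in range(kw):
                    acc += image[i + di][j + dj] * kernel[di][dj]
            row.append(acc)
        output.append(row)
    return output

# Hand-picked kernel that responds to left-to-right brightness increases.
EDGE_KERNEL = [
    [-1.0, 0.0, 1.0],
    [-1.0, 0.0, 1.0],
    [-1.0, 0.0, 1.0],
]
```

Applied to an image containing a vertical edge, this kernel produces large values along the edge and zeros in flat regions, which is the kind of local pattern detection that makes CNNs a natural fit for image data.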

2.2 Potential Applications in Rendering

Deep learning can be applied to various aspects of 3D graphics rendering:

- Image-Based Rendering: Deep learning can enhance image-based rendering techniques by predicting and generating missing pixels or elements in a scene based on learned patterns from training data.

- Denoising: Deep learning models can be trained to remove noise from rendered images, improving the quality of results from ray tracing and other methods. This process is known as neural denoising.

- Texture Synthesis: Neural networks can generate high-quality textures from low-resolution images, enabling artists to create detailed surfaces without extensive manual work.

- Scene Understanding: Deep learning can be used to analyze and understand scenes, enabling more intelligent rendering decisions based on the content and context of the scene.

- Style Transfer: Techniques like neural style transfer can be applied to render scenes in artistic styles, allowing for creative expression in 3D graphics.
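To illustrate the idea behind the denoising item above, here is a deliberately tiny sketch of a learned denoiser. It is not a neural network in any practical sense: a single 3-tap linear filter on a 1D signal stands in for a network's weights, and the sine-wave "clean" data, the noise level, and all function names are assumptions made for the example. What it does share with neural denoising is the training setup: the weights are fitted by gradient descent on pairs of noisy and clean data.

```python
import math
import random

def apply_filter(signal, weights):
    """Apply a 3-tap filter to the interior samples of a 1D signal."""
    return [
        sum(w * signal[i + k - 1] for k, w in enumerate(weights))
        for i in range(1, len(signal) - 1)
    ]

def mse(pred, target):
    """Mean squared error between two equal-length sequences."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)

def train_denoiser(noisy, clean, steps=300, lr=0.1):
    """Fit the three filter weights by gradient descent on mean squared error."""
    weights = [0.0, 1.0, 0.0]  # start from the identity filter
    target = clean[1:-1]
    m = len(target)
    for _ in range(steps):
        pred = apply_filter(noisy, weights)
        for k in range(3):
            # pred[i] is centered on noisy[i + 1], so tap k reads noisy[i + k].
            grad = 2.0 / m * sum(
                (pred[i] - target[i]) * noisy[i + k] for i in range(m)
            )
            weights[k] -= lr * grad
    return weights

# Synthetic training pair: a smooth signal and a noisy copy of it.
rng = random.Random(0)
clean = [math.sin(2 * math.pi * i / 50) for i in range(200)]
noisy = [c + rng.gauss(0.0, 0.3) for c in clean]

weights = train_denoiser(noisy, clean)
error_before = mse(noisy[1:-1], clean[1:-1])
error_after = mse(apply_filter(noisy, weights), clean[1:-1])
```

After training, `error_after` is lower than `error_before` on this data. A real neural denoiser replaces the single filter with many stacked layers and trains on rendered images rather than a sine wave, but the loop has the same shape.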

3. Benefits of Using Deep Learning in Rendering

3.1 Enhanced Efficiency

Deep learning can speed up rendering by replacing expensive computation or manual cleanup with a learned approximation. For example, a neural denoiser can clean up an image rendered with far fewer samples than a noise-free result would need, reducing both render time and the time spent on post-processing.

3.2 Improved Quality

By learning from large datasets of images, deep learning models can produce results that approach the quality of traditional rendering methods. Once trained, such a model can generate photorealistic visuals without paying the full cost of simulating light transport for every image.

3.3 Real-Time Capabilities

Deep learning techniques can enable real-time rendering in applications where speed is crucial. By optimizing tasks like denoising and texture synthesis, these methods can facilitate smoother experiences in gaming and virtual reality.

3.4 Creative Possibilities

Deep learning opens new avenues for creativity in 3D graphics. Artists can use neural networks to explore novel styles and techniques, pushing the boundaries of traditional rendering.

4. Challenges in Implementing Deep Learning in Rendering

4.1 Data Requirements

Training deep learning models requires large and diverse datasets. Gathering and curating these datasets can be challenging, especially for specific applications in rendering.

4.2 Computational Resources

While deep learning can enhance rendering processes, it also demands significant computational power for training models. This requirement may limit accessibility for smaller studios or independent developers.

4.3 Integration with Existing Workflows

Integrating deep learning techniques into established rendering pipelines can be complex. Developers must ensure compatibility with existing tools and processes to maximize the benefits of deep learning.

4.4 Interpretability

Deep learning models are often seen as “black boxes,” making it difficult to understand their decision-making processes. This lack of interpretability can be a concern for artists and developers who want to maintain control over rendering outcomes.

5. Current Research and Developments

5.1 Neural Rendering

Neural rendering is an emerging field that combines traditional rendering techniques with deep learning. Researchers are exploring methods in which a neural network replaces or augments parts of the rendering pipeline, for example by generating images from 3D models, which could substantially change how rendering is done.

5.2 Real-Time Denoising

Recent advancements in neural networks have led to the development of real-time denoising techniques. These methods can effectively reduce noise in images generated by ray tracing, enabling high-quality visuals without lengthy rendering times.
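The noise that these methods remove comes from the random sampling ray tracers use to estimate each pixel. The sketch below shows the trade-off with a toy example: the integrand is a stand-in for incoming light rather than a real light transport computation, and the function names are assumptions for illustration. The error of the estimate shrinks only with the square root of the sample count, so halving the noise costs roughly four times as many samples, which is why cleaning up a low-sample image with a denoiser is attractive.

```python
import math
import random

def estimate_pixel(num_samples, rng):
    """Monte Carlo estimate of a toy pixel value: the mean of sin(pi * u) on [0, 1].

    The exact value of this integral is 2 / pi.
    """
    total = sum(math.sin(math.pi * rng.random()) for _ in range(num_samples))
    return total / num_samples

def rms_error(num_samples, trials=200, seed=0):
    """Root-mean-square error of the estimate over repeated trials."""
    rng = random.Random(seed)
    exact = 2.0 / math.pi
    squared = sum(
        (estimate_pixel(num_samples, rng) - exact) ** 2 for _ in range(trials)
    )
    return math.sqrt(squared / trials)

low_sample_error = rms_error(4)
high_sample_error = rms_error(256)
```

With 64 times more samples, the expected error drops by a factor of about 8, not 64.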

5.3 Texture Generation

Deep learning models are being used to create detailed textures from low-resolution inputs, upscaling them while synthesizing plausible fine detail. This streamlines the texturing process by reducing the manual work needed to produce high-quality surfaces.

5.4 Scene Reconstruction

Researchers are investigating the use of deep learning for scene reconstruction from partial or incomplete data. A reconstructed scene gives the renderer information about content and context that it would otherwise lack, which supports more intelligent rendering decisions and better scene understanding.

6. Future Implications

6.1 Advancements in AI Technology

As AI technology continues to advance, the capabilities of deep learning in 3D graphics rendering will expand. Innovations in neural network architectures and training techniques will likely lead to more efficient and powerful rendering solutions.

6.2 Increased Adoption in Industries

The benefits of deep learning will drive its adoption across various industries, including gaming, film, architecture, and virtual reality. As tools become more accessible, more creators will leverage deep learning in their workflows.

6.3 Collaboration Between Artists and AI

The future of 3D graphics rendering may involve greater collaboration between artists and AI. Artists can use deep learning tools to enhance their creative processes, while AI systems can learn from human input to improve their outputs.

6.4 Ethical Considerations

As deep learning becomes more integrated into rendering processes, ethical considerations will arise. Issues related to data privacy, copyright, and the potential for misuse of generated content will need to be addressed.

Conclusion

Deep learning presents exciting opportunities for enhancing 3D graphics rendering. By automating tasks, improving quality, and enabling real-time capabilities, deep learning can transform traditional rendering processes. Despite challenges related to data requirements and integration, ongoing research and advancements in AI technology are paving the way for more efficient and creative rendering solutions.

As the field continues to evolve, the collaboration between artists and deep learning systems will likely redefine the landscape of 3D graphics. By embracing these innovations, the industry can unlock new possibilities for artistic expression and technological advancement, ultimately enriching the way we create and experience visual content.